Oct - Dec 2017

A Forest in the Desert

Design / 3D Content / WebVR / A-Frame

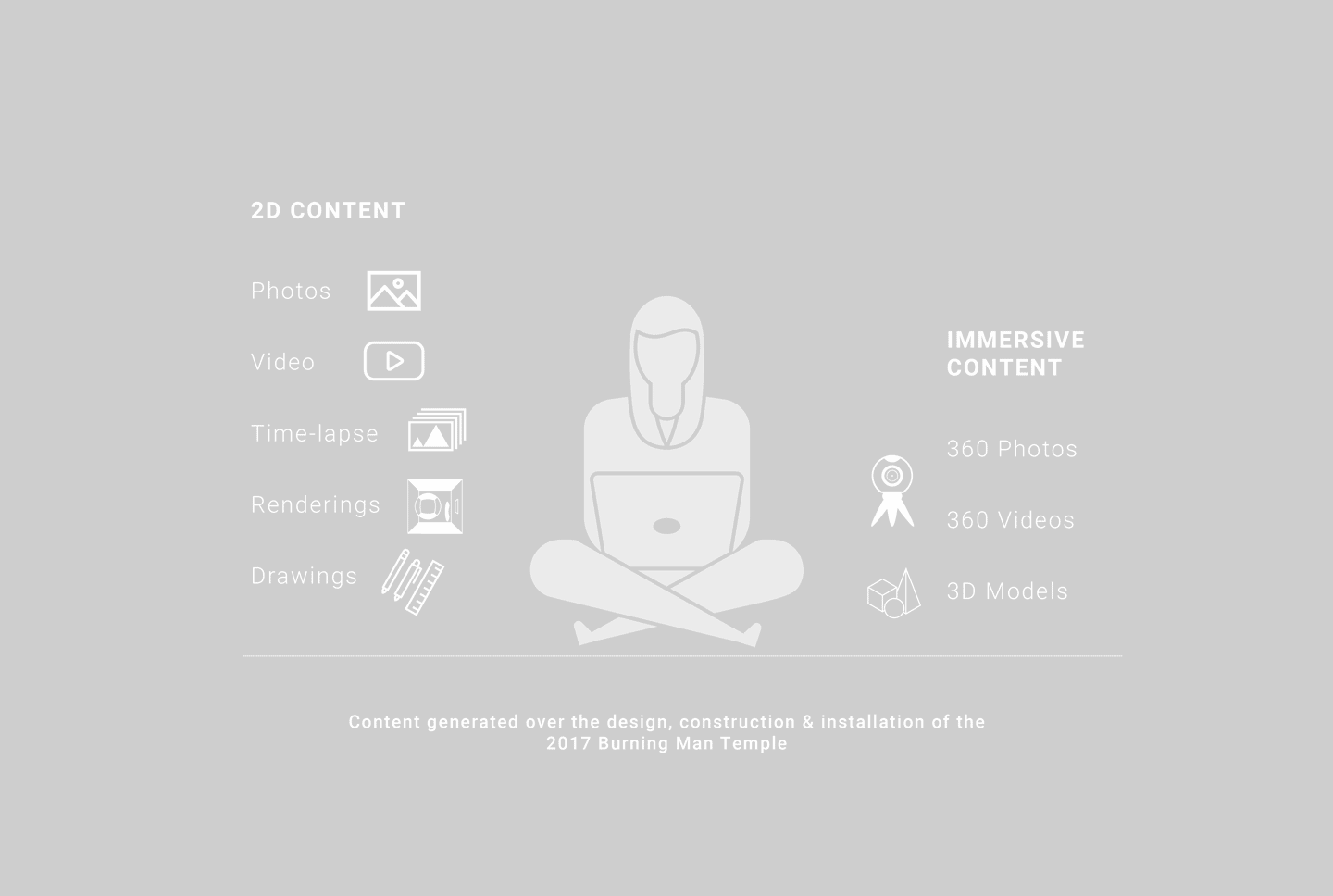

A Forest in the Desert is an open source VR prototype for sharing immersive content. It uses the content generated during the design and installation of the 2017 Burning Man Temple. It was built with A-Frame and works both in a web interface and in VR with 3DoF/6DoF controllers.

In collaboration with John Faichney.

View Prototype

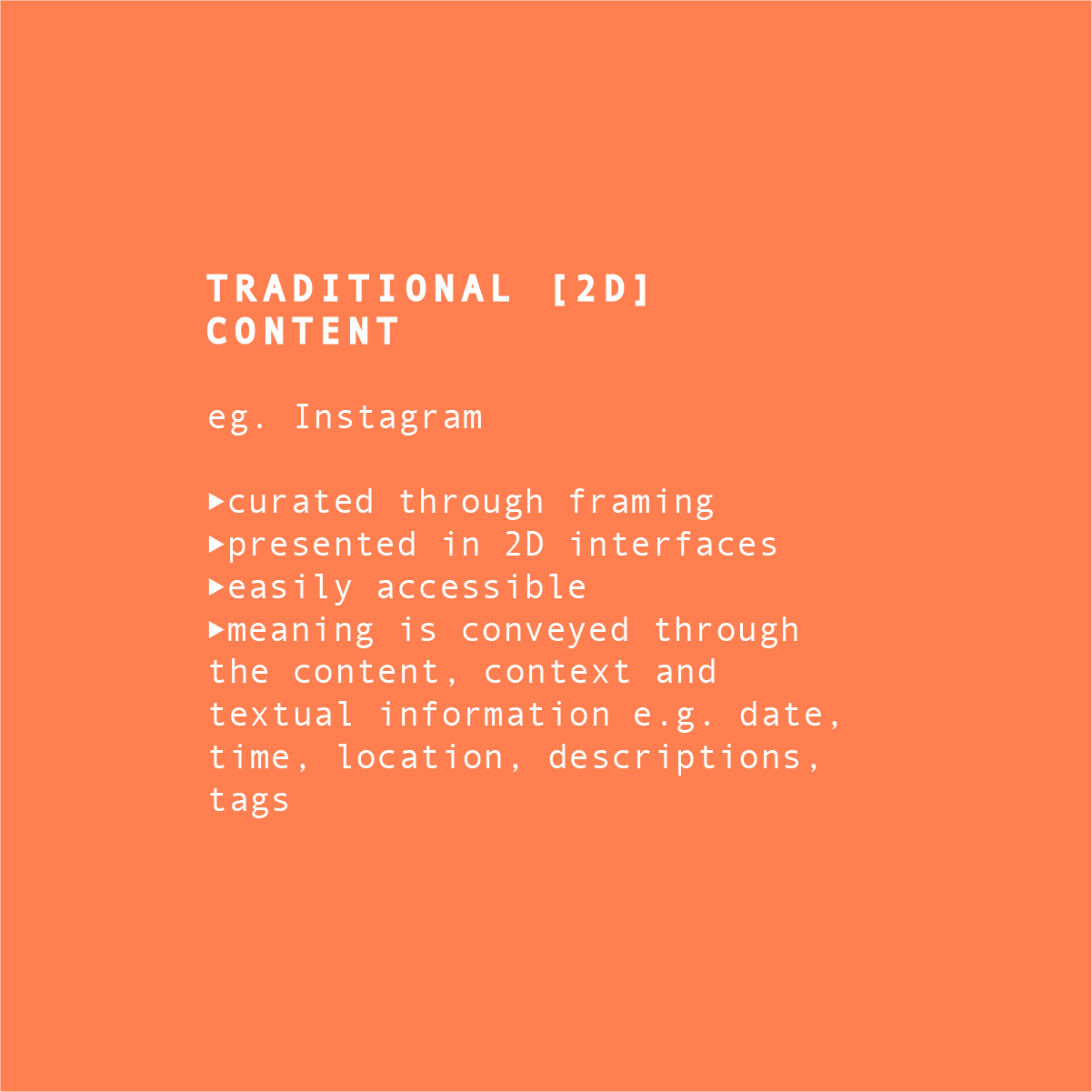

Sharing traditional (2D) content online is easy

So I’m a serial ‘documenter’, i.e. ALWAYS taking photos, videos, etc. According to Business Insider, people took an estimated 1.2 trillion digital photos in 2017 alone. Approx. 3.2 billion photos/videos are shared each day on social media, a phenomenon that speaks to the relative ease with which traditional user-generated content can be shared and consumed.

Sharing immersive content online in a meaningful way is challenging

I worked on an art installation for the greater part of 2017 and found myself amassing content at a rapid pace. This culminated in a month’s worth of 360s that I was excited to share, as they offered the most unfiltered view of the project. After researching ways to present this online, I realized that no existing platform would adequately tell the story.

Learn more about the Temple Art Installation >>>

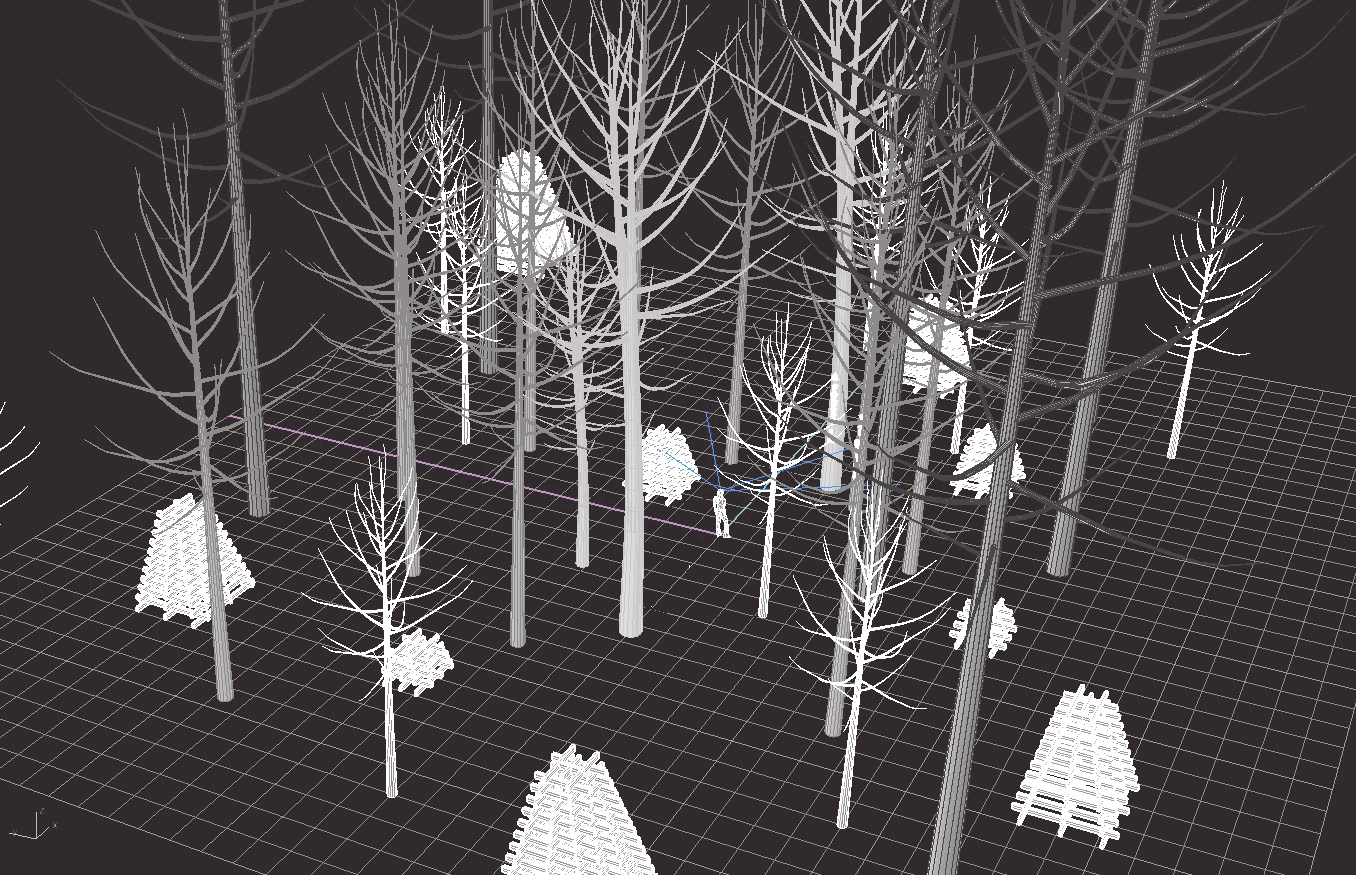

Prototype - A Forest in the Desert

A Forest in the Desert is a prototype that I designed and developed in response to these challenges. I worked with my partner John Faichney, a full-stack developer who built about 40% of the prototype and helped me map out the application architecture. The design / build for version 1.0 of the prototype took approx. 3 months. Check out the demo video below, test out the prototype yourself, and read on if you're interested in my design process.

Test out the A-Frame prototype in your browser >>>

Design Goals

The project began with a goal setting exercise intended to serve as a guide through the whole design process and form the base criteria for evaluation.

Provide a meaningful experience through contextually relating content

Allow users to discover content organically

Make it accessible to the largest audience possible

I started by trying to better understand how we experience traditional (2D) content vs. immersive content.

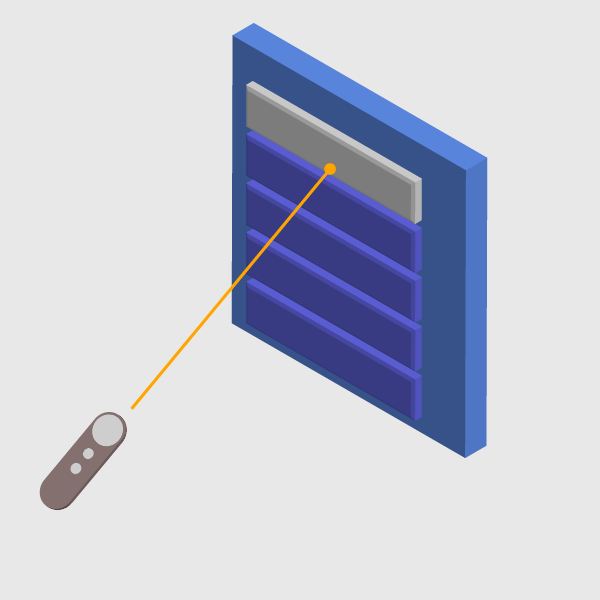

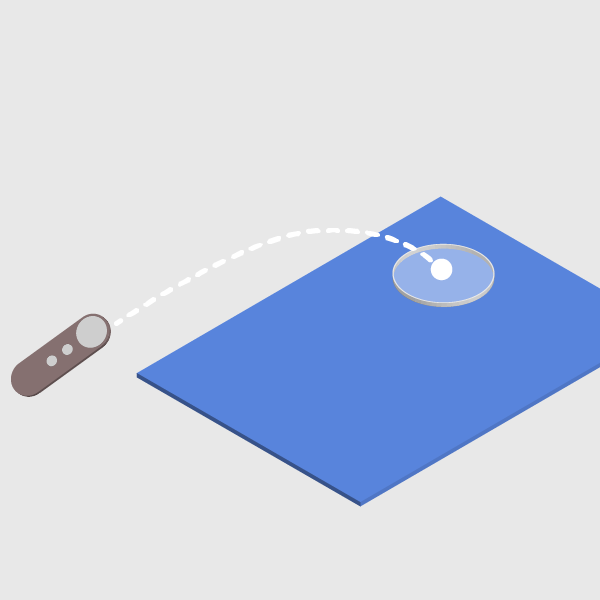

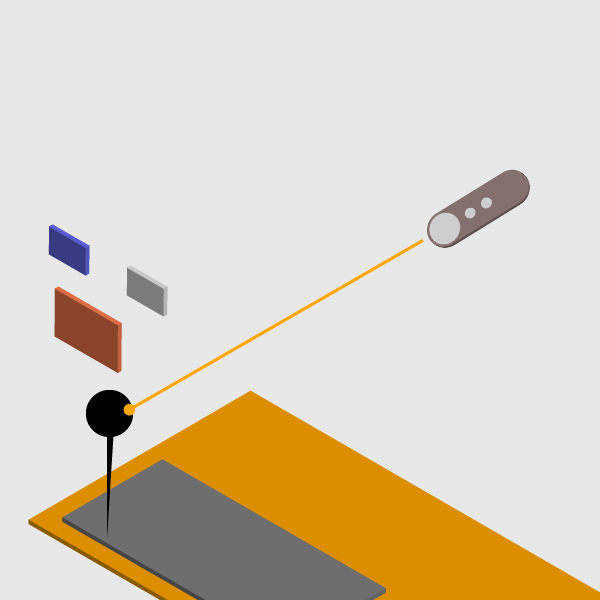

I also identified existing interaction and design patterns that supported my design goals

Navigation: 3D Menu

Information: 3D Panels

Locomotion: Teleportation

Navigation: Markers

Establishing a look & feel for the design helped me ensure visual consistency across the experience

3D 'architectural' elements

Contrasting lines / layering

Natural tones

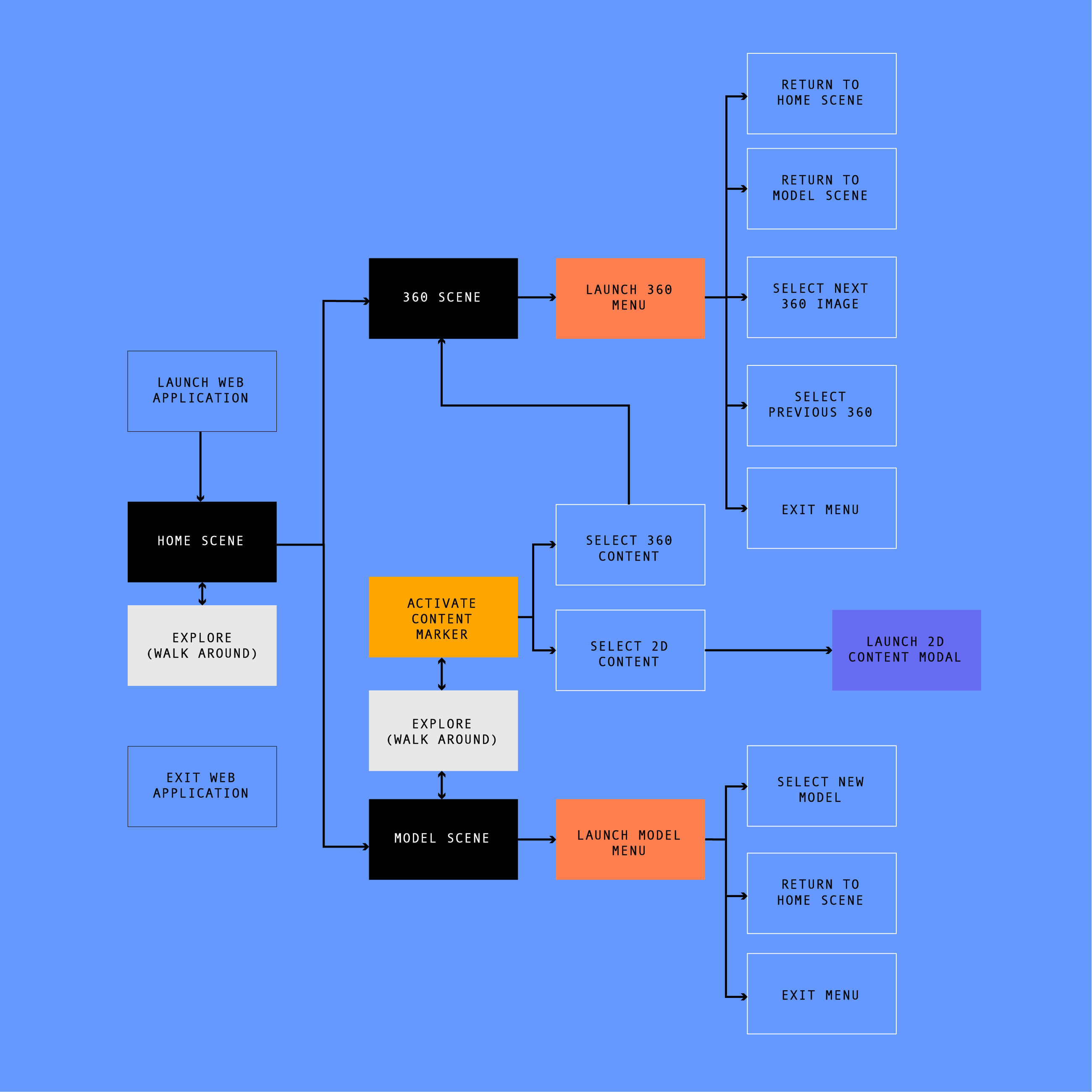

Defining a typical user flow helped me sequence the experience, curate functionality and ensure that only relevant information was being presented.

Meeting design goals

During the prototyping process, my design goals helped me prioritize features, evaluate alternatives, and find the best design solution.

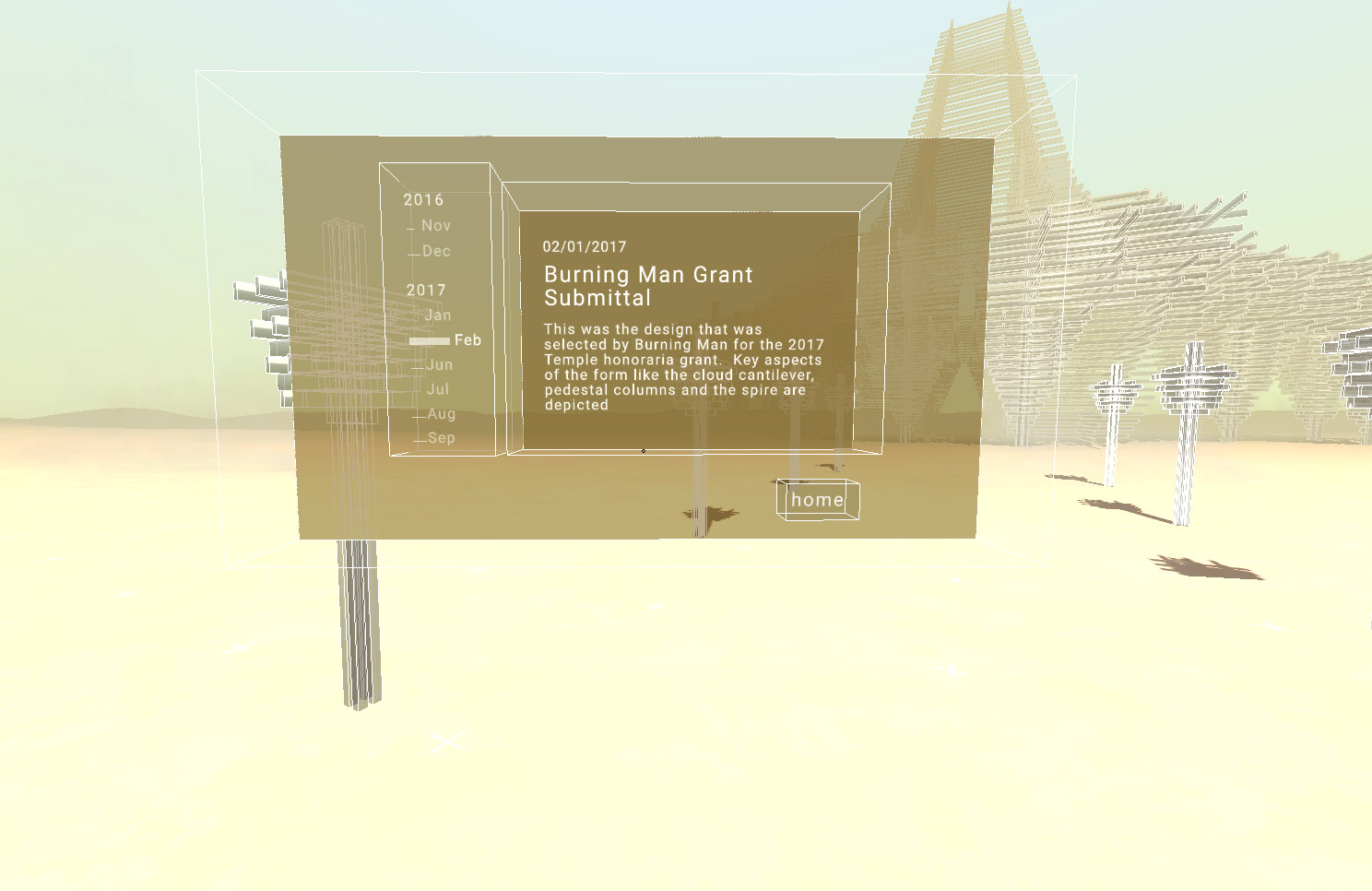

Design Goal 1 > Provide a meaningful experience through contextually relating content

I created a timeline component to help users understand the chronological relationships between content

This was used in both the model and 360 scenes to allow a user to navigate through co-located content over the course of the project.

Content associated with a specific location can only be accessed there; this gives users a sense of how all of the content fits together spatially.

This additional context exposes relationships which provide added layers of meaning.
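To make the idea concrete, here is a minimal TypeScript sketch of how a timeline over co-located content could be modelled. It is not the prototype's actual code: the `ContentItem` shape, the function names and the sample location IDs are all assumptions made for illustration.

```typescript
// Hypothetical content record; field names are assumptions, not the prototype's schema.
interface ContentItem {
  id: string;
  locationId: string; // place in the model where the item was captured
  capturedAt: string; // ISO 8601 date, e.g. "2017-08-05"
}

// Collect the items captured at one location and order them oldest first.
// filter() returns a new array, so the caller's array is not mutated by sort().
function timelineFor(items: ContentItem[], locationId: string): ContentItem[] {
  return items
    .filter((item) => item.locationId === locationId)
    .sort((a, b) => Date.parse(a.capturedAt) - Date.parse(b.capturedAt));
}

// Move along a timeline by `delta` steps, clamping at both ends.
// Returns -1 when the timeline is empty.
function stepTimeline(length: number, index: number, delta: number): number {
  if (length === 0) return -1;
  return Math.min(length - 1, Math.max(0, index + delta));
}

// Made-up sample data: two 360s at one location, one at another.
const sampleItems: ContentItem[] = [
  { id: "360-b", locationId: "north-gate", capturedAt: "2017-08-20" },
  { id: "360-a", locationId: "north-gate", capturedAt: "2017-08-05" },
  { id: "360-c", locationId: "central-hall", capturedAt: "2017-08-12" },
];

const northGateTimeline = timelineFor(sampleItems, "north-gate");
const nextIndex = stepTimeline(northGateTimeline.length, 0, 1);
console.log(northGateTimeline.map((item) => item.id), nextIndex);
```

Keying the timeline on a location ID is one way to express the rule that content can only be accessed where it was captured: stepping forward or backward never leaves the current place.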

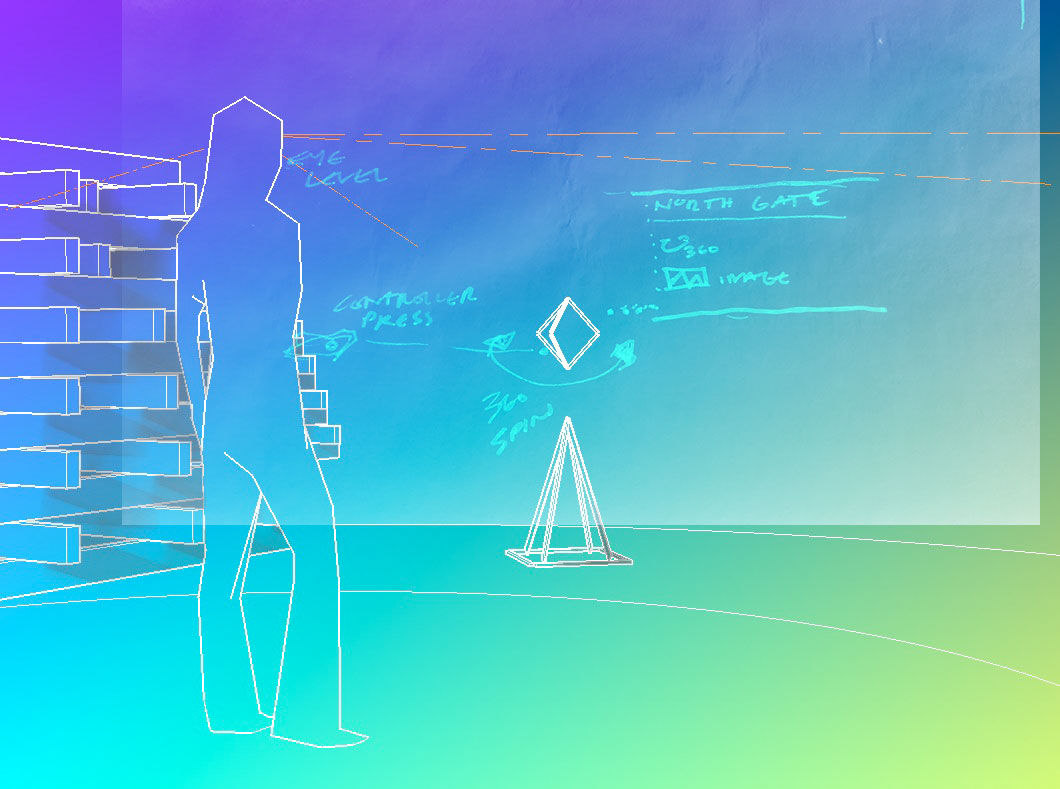

Design Goal 2 > Allow users to discover content organically

The mechanics of discovery and exploration make the experience more engaging.

Markers allow a user to see what content is available at a given place and time. These markers have to be found by exploring the model and adjusting the current point in the timeline. This creates a more immersive experience akin to our real-world interactions.
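The place-and-time rule for markers can be sketched roughly as below. This is an illustrative TypeScript example under stated assumptions, not the prototype's implementation: the `Marker` fields, the day-index timeline and the reach radius are all hypothetical.

```typescript
interface Vec3 {
  x: number;
  y: number;
  z: number;
}

// Hypothetical marker record; a marker exists only during a window of the timeline.
interface Marker {
  id: string;
  position: Vec3;
  availableFrom: number; // first timeline day on which the marker appears (inclusive)
  availableTo: number; // last timeline day on which the marker appears (inclusive)
}

// Straight-line distance between two points in the model.
function distanceBetween(a: Vec3, b: Vec3): number {
  const dx = a.x - b.x;
  const dy = a.y - b.y;
  const dz = a.z - b.z;
  return Math.sqrt(dx * dx + dy * dy + dz * dz);
}

// Markers that exist at the current point in the timeline.
function markersAt(markers: Marker[], day: number): Marker[] {
  return markers.filter((m) => day >= m.availableFrom && day <= m.availableTo);
}

// Of those, the markers close enough to the user to be opened.
// The radius is an assumed tuning value.
function reachableMarkers(markers: Marker[], day: number, user: Vec3, radius: number): Marker[] {
  return markersAt(markers, day).filter((m) => distanceBetween(m.position, user) <= radius);
}

// Made-up sample data.
const sampleMarkers: Marker[] = [
  { id: "spire", position: { x: 0, y: 0, z: 3 }, availableFrom: 1, availableTo: 10 },
  { id: "gate", position: { x: 20, y: 0, z: 0 }, availableFrom: 1, availableTo: 10 },
  { id: "altar", position: { x: 0, y: 0, z: 1 }, availableFrom: 8, availableTo: 10 },
];

const userPosition: Vec3 = { x: 0, y: 0, z: 0 };
console.log(reachableMarkers(sampleMarkers, 3, userPosition, 5).map((m) => m.id));
```

Separating the time filter from the distance filter keeps the two discovery mechanics independent: scrubbing the timeline changes which markers exist, while moving through the model changes which of them are within reach.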

Design Goal 3 > Make it accessible to the largest audience possible

Browser-based and Open-Source

In order to reach the broadest audience possible, I chose to implement the prototype using A-Frame, an open source WebVR framework. The initial prototype is VR device and controller agnostic (3DoF, 6DoF) and also supports keyboard and mouse interaction.

View the project code on GitHub >>>

Next steps

Onboarding, user testing, adding support for unbuilt UI components, adding support for 2D content, voice-overs, multi-user support ... open to feedback and suggestions :)